The Million-Token Mirage: A Micro-Modular Framework for AI Coding

By Timothy Mo

Massive AI context windows degrade reasoning and introduce silent code regressions when treated as infinite storage. Engineers must cap working context at 30 percent and enforce strict session hygiene using handover notes to maintain architectural continuity.

An engineer at a mid-sized fintech highlights their entire backend directory—roughly 50 files, 40,000 lines of Go—and pastes it into an AI coding session. The model has a million-token context window, so there’s plenty of room. Thirty minutes later, they’re staring at a confidently rewritten auth middleware that silently drops an RBAC check. The hallucination isn’t in a corner case. It’s in the critical path. It reaches production before QA catches it two days later.

This is the million-token mirage. The context window is not a workspace. It’s a cognitive trap. Engineering managers who let teams treat it as infinite storage are shipping technical debt with every AI-assisted PR.

The Silent Degradation Curve

The fundamental misunderstanding is conflating accepted tokens with actively reasoned tokens. An LLM that accepts a million-token input does not reason uniformly over all of it. Long-context testing consistently returns the same result: recall and reasoning quality degrade for information buried in the middle of the window, a pattern documented in the literature as the “lost in the middle” problem. The degradation is not a smooth fade from start to finish, either. Material in the middle of a long context is recalled disproportionately poorly, while the beginning and end fare measurably better.

The practical implication for engineering managers: treat 30 percent of the stated context limit as a working ceiling for any active coding session. A model marketed at 200,000 tokens should carry no more than 60,000 tokens in the window during complex coding work. Push past that threshold and you’re not seeing marginal quality reduction—you’re accepting real risk of dropped variables, hallucinated function signatures, and architectural regressions the model introduces without flagging.
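The ceiling is simple enough to encode as a guard. The sketch below is one way to do it; the function names are illustrative, and the 30 percent default is the rule of thumb from this article rather than a vendor-published figure.

```python
def working_ceiling(stated_limit_tokens: int, ceiling_percent: int = 30) -> int:
    """Return the token budget for an active session as a share of the stated limit."""
    if not 0 < ceiling_percent <= 100:
        raise ValueError("ceiling_percent must be in (0, 100]")
    # Integer arithmetic avoids floating-point rounding on large token counts.
    return stated_limit_tokens * ceiling_percent // 100


def within_ceiling(tokens_in_window: int, stated_limit_tokens: int) -> bool:
    """True while the session is still inside its working ceiling."""
    return tokens_in_window <= working_ceiling(stated_limit_tokens)


print(working_ceiling(200_000))         # 60000
print(working_ceiling(1_000_000))       # 300000
print(within_ceiling(85_000, 200_000))  # False
```

A check like this belongs wherever your team already measures prompt size, so that crossing the ceiling is a visible event and not a silent one.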

This is an engineering constraint, the same way you don’t run a database at 90 percent CPU and expect query latency to hold. You set margins. You operate inside them.

Disabling the Auto-Context Autopilot

Popular AI coding tools are optimized to feel powerful, not to perform reliably. Default codebase indexing in many IDE integrations will scan your entire repository and pull large parts of it into context automatically. The tool presents this as intelligence. In practice, it’s a reliable way to saturate a session before the second prompt.

Engineers who let automated retrieval decide what goes into context have handed over the one decision that most directly controls output quality. What goes into the context window is one of the most consequential configuration decisions in an AI coding session. Leaving it on autopilot is like letting the ORM decide your database indexes.

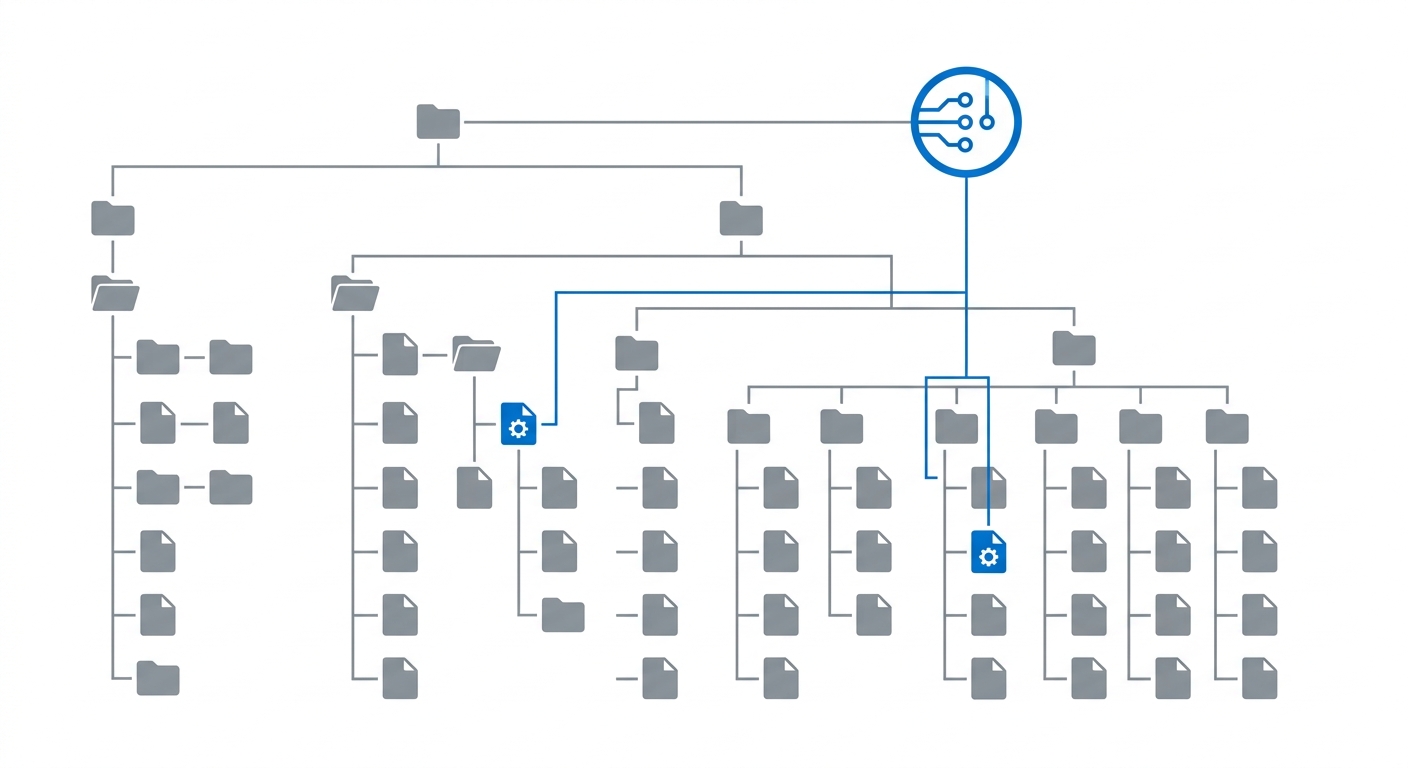

The discipline is simple: engineers must explicitly select the two or three files strictly necessary for the immediate micro-task. Not the whole module. Not the related utilities folder. The interface file and the target class. This requires that the engineer has already planned what they’re building before opening the AI session—which is the correct order of operations.
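Explicit selection can be enforced mechanically. The following is a minimal sketch, not a real tool integration: `build_context`, the file paths, and the four-characters-per-token estimate are all assumptions made for illustration, and a real setup would use the model's own tokenizer.

```python
MAX_FILES = 3        # "two or three files" from the rule above
CHARS_PER_TOKEN = 4  # assumption: crude heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def build_context(files: dict[str, str], budget_tokens: int) -> str:
    """Concatenate explicitly selected files, refusing oversized selections."""
    if not files:
        raise ValueError("select at least one file")
    if len(files) > MAX_FILES:
        raise ValueError(f"{len(files)} files selected; limit is {MAX_FILES}")
    context = "\n\n".join(f"// file: {path}\n{body}" for path, body in files.items())
    used = estimate_tokens(context)
    if used > budget_tokens:
        raise ValueError(f"context is ~{used} tokens; budget is {budget_tokens}")
    return context


# Hypothetical selection: the interface file and the target implementation.
selected = {
    "payments/handler.go": "package payments\n\ntype Handler interface {\n\tHandle(evt Event) error\n}\n",
    "payments/parser.go": "package payments\n\nfunc Parse(raw []byte) (Event, error) {\n\treturn Event{}, nil\n}\n",
}
context = build_context(selected, budget_tokens=60_000)
print(estimate_tokens(context) <= 60_000)  # True
```

The point of the hard failure is that an engineer who wants a fourth file has to stop and decide whether the task is really one task.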

The Micro-Modular Session Lifecycle

Done correctly, the session lifecycle looks like this.

Plan before you prompt. Break the feature into micro-modular components that can be reasoned about in isolation. A new payment webhook handler is not one task. It is: define the interface, implement the parser, implement the validator, wire the service, write the tests. Each is a discrete session.

Execute with a focused context. Attach only the files relevant to the current micro-task. Prompt specifically. Get the output. Review it. Run the compile check or unit tests.

Then close the session. Not pause it—close it. Once a small task is verified, you’re done. The instinct to keep going—“okay, now can you also add the retry logic…”—is exactly how context bloats past the working ceiling and quality collapses.

This is the hardest behavioral change to enforce. Chat history feels like continuity. It is actually a liability. Every prior debugging iteration, every dead-end approach, every discarded snippet sitting in that history competes for the model’s attention on the next task. Clear it.
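The execute, verify, close sequence can be modeled as a tiny state machine. This is a sketch under stated assumptions: the `Session` class below only records prompts and does not call any model, and every name in it is illustrative.

```python
class SessionClosed(RuntimeError):
    """Raised when a prompt is sent to a session that should no longer be used."""


class Session:
    def __init__(self, objective: str) -> None:
        self.objective = objective
        self.history: list[str] = []
        self.verified = False
        self.closed = False

    def prompt(self, text: str) -> None:
        """Record a prompt, but only while the micro-task is still open."""
        if self.closed:
            raise SessionClosed("session is closed; open a fresh one")
        if self.verified:
            raise SessionClosed("task already verified; close this session first")
        self.history.append(text)

    def mark_verified(self) -> None:
        """Call after the compile check or unit tests pass."""
        self.verified = True

    def close(self) -> None:
        """Discard the history so nothing leaks into the next task."""
        self.history.clear()
        self.closed = True


session = Session("Implement the parser")
session.prompt("Implement Parse for the webhook payload.")
session.mark_verified()
session.close()
print(session.history)  # []
```

The refusal after `mark_verified` is the code-level version of resisting "okay, now can you also add the retry logic".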

Bridging Sessions: The Handover Note Protocol

Clearing sessions creates an obvious concern: how does the LLM maintain architectural continuity across a feature that spans twelve micro-tasks? The answer is the Handover Note—a structured summary generated by the model at the end of each session, before clearing, that bootstraps the next one.

This is the prompt template engineering managers should distribute to their teams:

[System: Handover Protocol]

You have successfully completed this micro-task. Before we clear this session, generate a strict 'Handover Note' for the next LLM session.

Include exactly three sections:

1. STATE: What was just implemented and verified (briefly).
2. ARCHITECTURE CONTEXT: Any global variables, dependencies, or rigid patterns established that the next session must respect.
3. NEXT STEP: The exact technical objective of the upcoming task.

Output in concise markdown. Do not include code blocks unless strictly necessary for exact syntax matching.

The output is a dense 150–300 word document. It costs almost nothing. To resume: open a fresh session, paste the Handover Note, attach the files for the next micro-task, proceed. The new session starts with a full context budget, a clean attention window, and precise architectural continuity.
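Because the note is the only thing that crosses the session boundary, it is worth checking before the old session is cleared. The sketch below is one possible check; the helper names, the sample note, and the file path are hypothetical, and the section matching is deliberately loose.

```python
REQUIRED_SECTIONS = ("STATE", "ARCHITECTURE CONTEXT", "NEXT STEP")


def missing_sections(note: str) -> list[str]:
    """Return required section names that no line of the note starts with."""
    # Strip markdown heading, list, and numbering characters before comparing.
    labels = [line.lstrip("#*-0123456789. ").upper() for line in note.splitlines()]
    return [
        name
        for name in REQUIRED_SECTIONS
        if not any(label.startswith(name) for label in labels)
    ]


def bootstrap_prompt(note: str, attached_files: list[str]) -> str:
    """Build the opening message for the next session from a validated note."""
    missing = missing_sections(note)
    if missing:
        raise ValueError("handover note is missing: " + ", ".join(missing))
    parts = ["Handover Note from the previous session:", "", note.strip(), ""]
    parts.append("Files attached for this task:")
    parts.extend(f"- {path}" for path in attached_files)
    return "\n".join(parts)


# Hypothetical note, shortened for the example.
sample_note = """## STATE
Parser implemented; unit tests pass.

## ARCHITECTURE CONTEXT
All handlers return wrapped errors; no global state.

## NEXT STEP
Implement the validator for parsed events.
"""

print(missing_sections(sample_note))  # []
print(bootstrap_prompt(sample_note, ["payments/validator.go"]).splitlines()[0])
```

If `missing_sections` returns anything, ask the model to regenerate the note while the old session still has the context to do it.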

The Handover Note is not a workaround for a temporary limitation. It is the correct abstraction for managing stateful, multi-step construction with a stateless reasoning engine. The model carries no state beyond what is in its context, and nothing survives between sessions, so you are the continuity layer. The Handover Note is your API contract between sessions.

The Economics of the 20 Percent Overhead

Here’s the objection that comes up every time: generating and reading Handover Notes wastes tokens and slows velocity.

The overhead is real. Generating a Handover Note, storing it, and injecting it into the next session adds roughly 20 percent more tokens compared to a continuous single-session chat covering the same scope—illustratively, a feature that would otherwise consume 500,000 tokens in one long session costs an additional ~100,000 tokens in overhead.
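The arithmetic behind that illustration is worth making explicit. In this sketch the 20 percent figure is the article's estimate and the function name is made up for the example.

```python
def handover_overhead(base_tokens: int, overhead_percent: int = 20) -> tuple[int, int]:
    """Return (overhead_tokens, total_tokens) for a feature built with handovers."""
    overhead = base_tokens * overhead_percent // 100
    return overhead, base_tokens + overhead


overhead, total = handover_overhead(500_000)
print(overhead, total)  # 100000 600000
```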

Now do the other side of the ledger.

A context-saturated session generating a hallucinated architectural regression can cost days to diagnose and remediate—and that assumes QA catches it before release. Silent logical errors caused by dropped variables in long-context sessions are, by their nature, hard to spot in review. Rework cycles compound. The debugging conversations that follow are themselves new long-context sessions, often repeating the same degradation problem.

A 20 percent token overhead is among the cheapest quality guarantees available in AI-assisted engineering. You are paying 20 percent more in compute costs in exchange for sessions that operate inside their reliable performance envelope on every task. Refusing it to save on API costs while absorbing unpredictable rework is not engineering discipline. It is false economy.
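One way to frame the trade is as a break-even question: how much does the overhead have to reduce the chance of a regression before it pays for itself? The sketch below assumes a simple expected-cost model, and every number passed to it is a placeholder rather than measured data; substitute your own token pricing and rework costs.

```python
def break_even_risk_reduction(
    overhead_tokens: int,
    price_per_million_tokens: float,
    rework_hours: float,
    hourly_rate: float,
) -> float:
    """Smallest drop in regression probability at which the overhead pays off.

    Assumes the only costs are the extra tokens and the engineer time spent
    on rework when a regression ships.
    """
    overhead_cost = overhead_tokens / 1_000_000 * price_per_million_tokens
    rework_cost = rework_hours * hourly_rate
    if rework_cost <= 0:
        raise ValueError("rework cost must be positive")
    return overhead_cost / rework_cost


# Placeholder inputs, not real pricing: 100,000 overhead tokens at 10 currency
# units per million tokens, against two working days of rework at 100 per hour.
threshold = break_even_risk_reduction(100_000, 10.0, 16.0, 100.0)
print(f"{threshold:.4%}")
```

Under almost any plausible inputs the threshold is a small fraction of a percent, which is the shape of the argument above: the token bill is small next to a single multi-day rework cycle.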

Context Engineering as a Permanent Discipline

The engineers building reliable AI-assisted codebases are not the ones who learned to prompt well. They are the ones who learned to manage session state well.

Just as mature engineering practice means understanding memory allocation, dependency boundaries, and abstraction layers, AI-assisted development matures when engineers treat context as a managed resource. The million-token window is not a gift. It is a trap for the undisciplined.

Thirty percent is your working ceiling. Handover Notes are your state management layer. Micro-modular sessions are your architecture.

From what I’ve seen across deployments, this discipline doesn’t become irrelevant as models scale either. The underlying challenge—maintaining reliable attention across long, complex sequences—persists even as context windows grow, and the structural habit of scoping work tightly before prompting remains sound regardless of model capability. Teams that treat context as an afterthought ship subtle regressions disguised as features. Teams that enforce context hygiene gain something rarer: an accelerant that doesn’t quietly fail them at the worst possible moment.