The Memory Bottleneck: Why Your Curator Agent Dictates AI Success

Massive context windows aren't working memory—they are unsearchable junk drawers that degrade agent reasoning. To prevent context collapse, multi-agent systems require a dedicated Curator agent to actively filter, deduplicate, and synthesize information.

Throwing two million tokens into a context window isn’t memory—it’s a filing cabinet you’ve set on fire.

The industry has become captivated by raw context length. The pitch is seductive: bigger windows mean your agent “remembers” everything. Ship a one-million-token window, stuff it with every document, log, and transcript you own, and let the model sort it out.

Massive context is genuinely useful for one-shot synthesis of long documents. But as a substitute for dynamic memory, it’s a fundamentally flawed architecture—and the flaw isn’t subtle. Language models don’t sort out raw, unstructured context natively. Not reliably. Not cheaply.

The Illusion of Infinite Context

The breakdown starts with how transformer attention actually processes data. As a sequence grows, the attention weights—which calculate pairwise relevance between tokens—become increasingly diluted.

Recall collapses in the middle. Liu et al. (2024) demonstrated that model performance is highest when relevant information appears at the beginning or end of the input context, and degrades significantly when that information sits in the middle of long contexts, a pattern that held even for models explicitly designed for long-context tasks. That’s a structural constraint, not a temporary bug. A one-million-token window does not guarantee one million tokens of uniformly usable working memory. It gives you a U-shaped performance curve with a pronounced trough at its center, whose depth varies by architecture, prompt structure, and task (Liu et al., 2024). Architects who treat context length as an infinitely expanding memory budget are budgeting against capacity that is not uniformly usable in practice.

The compute math is equally punishing. Transformer attention scales quadratically with sequence length: double the context and the attention computation roughly quadruples, while the KV (Key-Value) cache grows linearly alongside it, straining accelerator memory and spiking latency. Research on dynamic token pruning makes the slack in typical long-context requests visible: SlimInfer achieves up to 2.53× time-to-first-token (TTFT) speedup and 1.88× end-to-end latency reduction on LLaMA3.1-8B-Instruct on a single RTX 4090, purely by removing tokens that don’t affect the output (Long et al., 2025). That isn’t exotic re-architecture. It’s pruning away the noise that context-stuffing introduces as a matter of course. Aggressive pruning carries its own risks (strip out the wrong contextual signals and you can quietly derail subtle reasoning chains), but the latency cost you’re paying without it compounds with every agent call in your pipeline.
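To make the scaling concrete, here is a back-of-the-envelope sketch. The model dimensions (32 layers, 8 KV heads, head size 128, 2-byte values) are illustrative assumptions, not figures taken from the cited work, and the helper names are hypothetical.

```python
def attention_score_entries(seq_len: int) -> int:
    """Pairwise query-key scores per head per layer under full self-attention."""
    return seq_len * seq_len


def kv_cache_bytes(seq_len: int, layers: int = 32, kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """Approximate KV cache size: one key and one value vector per token,
    per layer, per KV head. All default dimensions are assumed values."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * seq_len


for n in (8_000, 16_000, 32_000):
    gib = kv_cache_bytes(n) / 2**30
    print(f"{n} tokens: {attention_score_entries(n)} score entries, {gib:.2f} GiB KV cache")
```

Doubling the sequence length quadruples the score entries and doubles the cache, which is why latency and memory pressure rise together as context grows.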

The industry’s default answer to both the recall and latency problems has been naive vector-search RAG (Retrieval-Augmented Generation): retrieve the top-k semantically similar chunks, prepend them to the prompt, and assume downstream reasoning handles the rest. Frequently, it doesn’t. Semantic similarity is not the same as contextual relevance. A chunk that scores high on cosine similarity may be entirely accurate in isolation yet worse than useless in context: a paragraph from a superseded document version, a definition that matches the surface query but contradicts the agent’s current decision branch, or a data point stripped of the temporal conditions that made it actionable.

Papadimitriou et al. (2024) compared naive vector search against hybrid vector-keyword retrieval and found that hybrid search achieved a 72.7% pass rate on a multi-metric evaluation framework, with naive retrieval consistently underperforming on complex, multi-step queries. Retrieving accurate chunks without synthesizing their broader context often fails to support reasoning. Instead, it generates confident-sounding noise that misleads the agents consuming it.
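A minimal sketch of hybrid scoring shows how a keyword signal can demote a chunk that is semantically close but points at the wrong document version. The toy embeddings, the `alpha` weight, and the function names are assumptions for illustration; this is not the evaluation setup used in the cited paper.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_overlap(query, text):
    """Fraction of query words that also appear in the chunk text."""
    q = set(query.lower().split())
    t = set(text.lower().split())
    return len(q & t) / len(q) if q else 0.0


def hybrid_rank(query, query_vec, chunks, alpha=0.5):
    """Rank chunk ids by alpha * vector score + (1 - alpha) * keyword score."""
    scored = [
        (alpha * cosine(query_vec, c["vec"])
         + (1 - alpha) * keyword_overlap(query, c["text"]), c["id"])
        for c in chunks
    ]
    return [chunk_id for _, chunk_id in sorted(scored, reverse=True)]


chunks = [
    {"id": "v1-policy", "text": "refund window is 30 days", "vec": [0.9, 0.1]},
    {"id": "v2-policy", "text": "refund window is 14 days for policy v2", "vec": [0.8, 0.2]},
]
query, query_vec = "refund window policy v2", [1.0, 0.0]
print(hybrid_rank(query, query_vec, chunks, alpha=1.0))  # vector-only ranking
print(hybrid_rank(query, query_vec, chunks, alpha=0.5))  # hybrid ranking
```

With `alpha=1.0` the older policy chunk wins on vector similarity alone; blending in keyword overlap moves the current version to the top.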

More structurally rigorous approaches—like decoupling KV cache and attention computation into a dedicated vector database layer (Deng et al., 2025)—acknowledge that retrieval and inference are separate problems requiring separate engineering. But even those systems optimize the plumbing. They don’t address the upstream cognitive question: what should be retrieved, when, and why?

That question belongs to an architectural layer most teams haven’t built yet: the Curator.

Why State Management Breaks Agentic Loops

Memory is a severe architectural constraint. But to fix it, you first need to understand exactly how the default state management breaks multi-agent pipelines.

Human episodic memory is autobiographical: a continuous, selectively retrievable record of past events, preferences, and decisions (Sarin et al., 2025). We organically consolidate memories, discarding irrelevant details while retaining actionable heuristics. LLMs have none of this natively. “Traditional LLM deployments typically follow a stateless architecture in which each user input is processed independently, with prior interactions forgotten unless explicitly provided as input context” (Sarin et al., 2025).

Every invocation starts with a blank slate. The model carries no inherent record of what it attempted three steps ago, what constraints it already resolved, or which branch of a plan previously collapsed. Episodic memory—the kind that “mirrors the autobiographical memory of humans, allowing the model to reference details such as user preferences, prior conversations, and historical decisions” (Sarin et al., 2025)—must be actively engineered in from the outside.

Agent frameworks like ReAct and Plan-and-Solve address this statelessness by embedding reasoning traces directly into the prompt: the chain of thought becomes the memory. It works at small scale. It collapses structurally as interaction loops extend. “In ReAct-style agents, explicit reasoning traces themselves become part of the context, enabling interpretability and complex reasoning but simultaneously introducing a substantial and persistent contextual burden” (Huang et al., 2026).

Picture a coding agent debugging a script. It runs a test, hits a Python traceback, writes that raw error to context, attempts a fix, hits a new traceback. Each loop iteration appends more raw data. Tool outputs pile on—web search results, database query responses, and intermediate agent outputs “can also accumulate dramatically” (Huang et al., 2026). Within a handful of cycles, the context window stops functioning as agile working memory and degrades into an undifferentiated pile of prior state.

This is the junk drawer problem. Execution loops writing unedited logs, failed plan branches, and intermediate scratch work directly into a vector database degrade retrieval quality with every new entry. The system no longer surfaces relevant context. It fishes through its own noise.

Bousetouane (2026) frames the consequence directly: without deliberate design, “agent behavior often degrades due to loss of constraint focus, error accumulation, and memory-induced drift.” Stable long-horizon behavior requires a system that “preserves a stable signal to noise ratio as the interaction horizon grows” (Bousetouane, 2026), a property that passive accumulation fundamentally cannot provide.

This compounding degradation is measurable. Yang et al. tested how injecting irrelevant context degrades mathematical reasoning on the GSM8K benchmark. Adding a single distractor sentence produced notable accuracy reductions (Yang et al., 2025). At a fixed reasoning depth of $r_s = 5$, Grok-3-Beta’s step accuracy dropped from 43% with one irrelevant context item to just 19% under fifteen (Yang et al., 2025)—a 24-percentage-point collapse driven entirely by informational noise, not by inherent problem complexity or model capacity limits.

Every piece of irrelevant state permitted into the prompt becomes a tax on every subsequent reasoning step. That tax compounds. Which means the write path into an agent’s memory is just as consequential as the read path—and that’s the core architectural challenge: determining what actually gets written, and how.

The Curator: Your System’s Cognitive Filter

Unmanaged memory breaks multi-agent pipelines at scale. A larger context window doesn’t solve it—it defers the collapse. The durable fix is deploying a dedicated agent whose entire operational purpose is to control what flows into and out of memory.

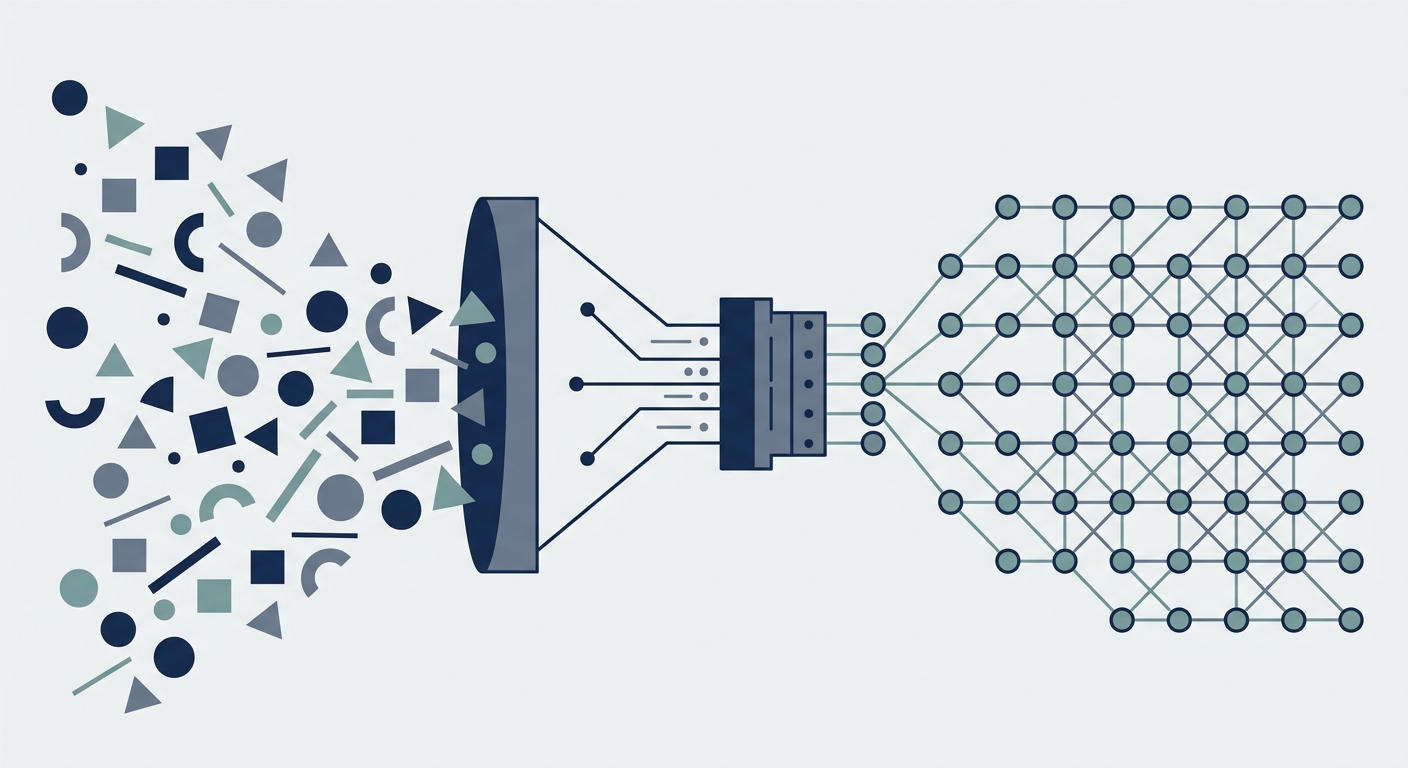

Call it the Curator Agent. Its role is singular: act as the cognitive filter between raw information and the working memory of every other agent in the system. Most architectures today skip this layer entirely, relying on passive similarity searches that dump top-k chunks into the prompt and hope the model parses the pile correctly. The Curator does something categorically different: it synthesizes, prunes, and routes information as an active decision-maker rather than a dumb index.

Yes, this adds cost. Intercepting the write path requires an additional LLM call—latency and compute overhead on every operational cycle. For a simple single-turn Q&A bot, a Curator is overkill. For autonomous agents running complex, long-horizon workflows, that overhead pays for itself by preventing catastrophic context collapse.

The Write Path

Every time a worker agent completes a task, it produces output—tool calls, intermediate reasoning steps, raw database returns. Without a Curator, that output gets appended blindly to a vector store, accumulating indefinitely. The Curator intercepts this flow.

Its first write responsibility is deduplication. In a multi-agent system processing a live event stream, the same entity, fact, or error appears dozens of times in slightly different syntactic forms. Writing all of them dilutes the vector space. A recent preprint reports that on a dataset of 13,000 issues and 120,000 events, deduplication-based consolidation achieved 97.2% retention precision while cutting memory store size by 58%—21.8 percentage points better than the baseline (Kerestecioglu et al., 2026). That isn’t a marginal infrastructure gain. It’s the difference between a knowledge store that scales and one that collapses under its own redundancy.
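One simple way to sketch write-time deduplication is to normalize each entry to a set of word tokens and reject near-duplicates by Jaccard similarity. This is an illustrative stand-in, not the consolidation method from the cited preprint; the `DedupingStore` class and the 0.8 threshold are assumptions.

```python
import re


def normalize(text: str) -> frozenset:
    """Lowercase the text and return its set of alphanumeric word tokens."""
    return frozenset(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: frozenset, b: frozenset) -> float:
    """Jaccard similarity between two token sets."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


class DedupingStore:
    """Hypothetical memory store that refuses near-duplicate writes."""

    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold
        self.entries = []  # list of (token_set, original_text)

    def write(self, text: str) -> bool:
        """Store the text unless a similar entry exists. Returns True if written."""
        tokens = normalize(text)
        for existing_tokens, _ in self.entries:
            if jaccard(tokens, existing_tokens) >= self.threshold:
                return False
        self.entries.append((tokens, text))
        return True


store = DedupingStore()
print(store.write("Build failed: missing dependency numpy"))
print(store.write("build failed - missing dependency: NumPy!"))
print(store.write("Deploy succeeded on staging"))
```

A production Curator would more likely compare embeddings or resolved entity IDs, but the control flow is the same: check before write, and keep one canonical form.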

The second write responsibility is summarization and graph updating. Rather than appending raw agent output, the Curator condenses completed tasks into structured summaries and writes updated entity relationships into a central knowledge graph. The store stays semantically dense rather than syntactically bloated. Summarization is intentionally lossy—the goal is a compact semantic representation, not a verbatim transcript. Some granular detail is inevitably sacrificed, but careful tuning of the summarization prompt preserves actionable constraints and critical state changes.

The Read Path

On the read side, the Curator behaves like a specialized reference librarian, not a generic search engine. When a worker agent submits a context request, the Curator queries across vector, graph, and relational stores, then synthesizes a concise brief tailored to that worker’s current task. The worker receives a curated, formatted packet—not a ranked list of disjointed chunks it must interpret on its own.
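The read path can be sketched as a function that takes candidate facts from several stores and assembles a budgeted brief. In a real system the synthesis step would likely be an LLM call; here it is replaced by score-ordered selection under a word budget. The `Fact` type, the `build_brief` name, and the budget are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Fact:
    source: str   # which store produced it: "vector", "graph", or "relational"
    text: str
    score: float  # relevance to the worker's current task (assumed precomputed)


def build_brief(task: str, facts: list[Fact], max_words: int = 40) -> str:
    """Assemble a brief: highest-scoring facts first, skipping duplicates
    and any fact that would push the brief over the word budget."""
    lines = [f"Task: {task}"]
    used, seen = 0, set()
    for fact in sorted(facts, key=lambda f: f.score, reverse=True):
        words = len(fact.text.split())
        if fact.text in seen or used + words > max_words:
            continue
        seen.add(fact.text)
        used += words
        lines.append(f"- [{fact.source}] {fact.text}")
    return "\n".join(lines)


facts = [
    Fact("graph", "API v2 supersedes API v1", 0.9),
    Fact("vector", "Rate limit is 100 requests per minute", 0.7),
    Fact("relational", "Owner: platform team", 0.5),
]
print(build_brief("migrate client to API v2", facts, max_words=8))
```

The worker receives one formatted packet with provenance tags rather than three ranked lists it has to reconcile itself.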

The empirical case for this decoupling is strengthening. Preprint evidence from Li et al. (2026) reports that active context curation lifted Gemini-3.0-flash’s success rate on the WebArena benchmark from 36.4% to 41.2% while simultaneously cutting token consumption 8.8%, from 47.4K to 43.3K tokens per task. On the more demanding DeepSearch benchmark, the same framework pushed success rates from 53.9% to 57.1% with token consumption cut by a factor of 8 (Li et al., 2026). A related preprint by Verma (2026) describes autonomous compression—where the Curator decides dynamically when to compress rather than following rigid pre-programmed thresholds—averaging 6.0 compressions per task, with individual instances reaching up to 57% token savings (Verma, 2026).

Across these benchmarks, the pattern holds: decouple context curation from task execution and hand it to a dedicated agent, and both reasoning quality and operational efficiency improve together. The perceived trade-off between factual recall and token budgeting largely dissolves.

What this means for system architecture—and how to build a Curator that doesn’t itself become an operational bottleneck—is where the real engineering decisions live.

Architecting the Active Memory Loop

Once you accept that the Curator is active infrastructure rather than a passive logger, the design question becomes concrete: what exactly is it managing, and in what structural shape?

The most robust answer is a three-tier memory hierarchy, modeled deliberately on the virtual-memory abstraction operating systems have used for decades: fast registers at the top, slow disk at the bottom, a background kernel process paging data transparently across layers.

Tier 1 — Working Memory is the scratchpad. Ephemeral, context-local, wiped between tasks. Individual agents write intermediate reasoning steps, partial tool outputs, and in-flight state variables here. Nothing persists beyond the current execution window. Working memory exists purely to keep Tier 2 clean—raw churn stays local, and only meaningful outcomes get promoted upward.

Tier 2 — Episodic Memory is the chronological audit trail. Every completed action, final decision, and validated observation is appended asynchronously—non-blocking, so it doesn’t tax the worker agent’s critical path. Left unmanaged, this log grows without bound. That’s the exact failure mode the Curator exists to prevent. On a scheduled cadence (hourly, nightly, or event-triggered at a configurable token threshold), the Curator compresses these episodic batches into narrative summaries—something like: “Between 14:00 and 18:00 SGT, the research agent evaluated twelve sources, rejected four for recency, and extracted the following validated claims.” The raw entries are then pruned.
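The threshold-triggered compaction described above might look like the following sketch. Whitespace word counts stand in for real token counts, and `stub_summarize` is a placeholder for the LLM summarization call; both are assumptions, as are the class and field names.

```python
from dataclasses import dataclass, field


@dataclass
class EpisodicLog:
    """Hypothetical Tier 2 log that compacts itself at a token threshold."""
    token_threshold: int = 50
    entries: list = field(default_factory=list)
    summaries: list = field(default_factory=list)

    def token_count(self) -> int:
        # Word count as a rough proxy for tokens (assumption).
        return sum(len(entry.split()) for entry in self.entries)

    def append(self, entry: str, summarize) -> None:
        """Append an entry, then compact if the raw log exceeds the threshold."""
        self.entries.append(entry)
        if self.token_count() >= self.token_threshold:
            self.summaries.append(summarize(self.entries))
            self.entries.clear()  # raw entries are pruned after summarization


def stub_summarize(entries: list) -> str:
    """Placeholder for an LLM call that writes a narrative summary."""
    return f"{len(entries)} events compacted; last: {entries[-1]}"


log = EpisodicLog(token_threshold=6)
log.append("evaluated source A", stub_summarize)
log.append("rejected source B stale", stub_summarize)
print(log.summaries, log.entries)
```

The same `append` hook could be driven by a scheduler instead of a threshold; the key property is that raw entries do not outlive their summary.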

Tier 3 — Semantic Memory is where durable, organizational intelligence lives. Rather than a flat vector store, this tier is best structured as a Knowledge Graph, where nodes represent concepts and edges encode typed relationships—contradicts, supports, supersedes, derived-from. The Curator is the sole writer; worker agents query it read-only.
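As a rough sketch of that structure, the graph below stores typed edges and enforces the single-writer rule with a simple role check. The class, the `"curator"` role string, and the in-memory storage are illustrative assumptions; a production system would use a graph database and real access control.

```python
from collections import defaultdict

EDGE_TYPES = {"contradicts", "supports", "supersedes", "derived-from"}


class KnowledgeGraph:
    """Hypothetical Tier 3 store: typed edges, Curator-only writes."""

    def __init__(self):
        self._edges = defaultdict(set)  # source -> {(edge_type, target)}

    def add_edge(self, writer: str, source: str, edge_type: str, target: str) -> None:
        if writer != "curator":
            raise PermissionError("only the Curator may write to semantic memory")
        if edge_type not in EDGE_TYPES:
            raise ValueError(f"unknown edge type: {edge_type}")
        self._edges[source].add((edge_type, target))

    def neighbors(self, source: str, edge_type=None) -> list:
        """Read-only query: targets reachable from source, optionally by type."""
        return sorted(
            target for (etype, target) in self._edges.get(source, set())
            if edge_type is None or etype == edge_type
        )

    def is_superseded(self, node: str) -> bool:
        """True if any node has a 'supersedes' edge pointing at this one."""
        return any(
            etype == "supersedes" and target == node
            for edges in self._edges.values()
            for (etype, target) in edges
        )


kg = KnowledgeGraph()
kg.add_edge("curator", "policy-v2", "supersedes", "policy-v1")
kg.add_edge("curator", "policy-v2", "derived-from", "audit-2024")
print(kg.neighbors("policy-v2"), kg.is_superseded("policy-v1"))
```

Typed edges let a reader filter out superseded facts with a graph check instead of hoping the embedding space separates old versions from new ones.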

The empirical rationale for this structure is strong. On complex, corpus-wide reasoning queries—the kind requiring multi-hop chains through dispersed evidence—GraphRAG achieved comprehensiveness win rates of 72–83% on podcast transcripts and 72–80% on news corpora against a naïve vector-retrieval baseline, with diversity win rates of 75–82% and 62–71% respectively (Edge et al., 2024). Even at the computationally cheaper root-level configuration, GraphRAG retained a 72% comprehensiveness win rate and a 62% diversity win rate over naïve RAG (Edge et al., 2024).

Building and maintaining a knowledge graph is expensive upfront—every ingested document requires an LLM pass to extract nodes and edges. But for agent workflows where a single retrieved hallucination or superseded fact can cascade into catastrophic downstream decisions, that comprehensiveness margin matters far more than initial ingestion latency.

The Curator’s relationship to all three tiers is asynchronous and background-oriented—closer to a garbage collector in a runtime environment than a synchronous API. It runs as a scheduled or event-driven process: reinforcing heavily accessed Tier 3 nodes (raising their retrieval weight), demoting stale nodes that haven’t been queried in a configurable window, and flagging logical contradictions between recently ingested Tier 2 summaries and existing Tier 3 assertions for automated or human resolution. Like a well-tuned GC, it’s invisible during normal operations. Its absence only becomes glaringly visible when memory bloats, retrieval accuracy degrades, or stale beliefs start corrupting downstream outputs.
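A background maintenance pass along these lines could be sketched as follows. The boost and decay factors, the seven-day staleness window, and the flat key-value model of assertions are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass

DAY = 86_400  # seconds


@dataclass
class Node:
    name: str
    weight: float
    last_access: float          # seconds since epoch
    hits_since_last_pass: int = 0


def maintenance_pass(nodes, now, stale_after=7 * DAY, boost=0.1, decay=0.5):
    """Reinforce nodes that were accessed, demote nodes that have gone stale."""
    for node in nodes:
        if node.hits_since_last_pass > 0:
            node.weight += boost * node.hits_since_last_pass
        elif now - node.last_access > stale_after:
            node.weight *= decay
        node.hits_since_last_pass = 0


def find_contradictions(new_claims: dict, existing: dict) -> list:
    """Return keys asserted in both sets with different values, for review."""
    return sorted(
        key for key, value in new_claims.items()
        if key in existing and existing[key] != value
    )


now = 1_000 * DAY
hot = Node("deploy-runbook", 1.0, now, hits_since_last_pass=3)
cold = Node("legacy-api-notes", 1.0, now - 30 * DAY)
maintenance_pass([hot, cold], now)
print(hot.weight, cold.weight)
print(find_contradictions({"api.version": "v2", "owner": "platform"},
                          {"api.version": "v1", "owner": "platform"}))
```

Contradictions are only flagged here, not resolved; whether resolution is automated or routed to a human is a policy decision outside the pass itself.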

This keeps agent context bounded and semantically coherent. But a bounded memory system is only as valuable as the proprietary state it accumulates over time, and as the operational decisions made before the first agent ships.

What this means in production

The architecture above describes what to build. The decisions below describe what to commit to before the first PR ships. None of these have universal answers—they’re calibrated against your domain’s noise tolerance, recall floor, and human-in-the-loop budget.

- What to log — Every tool call (arguments + status), every retrieval query (the result IDs returned, not the result bodies), every agent decision that diverges from the most recent successful trace. Episodic memory needs the trail, not the transcript.

- What not to log — Raw tool outputs beyond a token budget; don’t append a 4,000-token issue body to context just because the agent fetched it (the Curator summarizes on write). Anything containing PII unless your retention policy explicitly permits it.

- What to summarize — Completed task outputs, multi-step traces longer than one inference window, and any external content (papers, docs, threads) queried more than once. The Curator’s summarization pass is where token bloat dies.

- What to keep raw — Hard constraints, compliance-relevant facts (regulatory rulings, dated commitments), error messages with stack traces (lossy summarization here is how regressions get reintroduced silently), and identifiers (issue numbers, commit SHAs, user IDs) that any downstream join depends on.

- What must require human approval — Any agent action with externally visible blast radius: production deploys, public-facing writes (PRs, emails, customer messages), database mutations that aren’t sandboxed, schema changes, financial transactions. Memory writes themselves typically don’t—they should be reversible by definition.

- What metrics to monitor — Context precision, context recall, token-reduction delta after enabling the Curator, sustained autonomy duration, Curator latency overhead, and knowledge-graph drift (typed-edge contradictions per week as a coherence proxy). The next section unpacks the three that matter most.
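The logging, summarization, and approval rules in the list above can be encoded as a small policy function. The record kinds, action names, and the 200-word budget below are hypothetical labels chosen for illustration; your own taxonomy will differ.

```python
RAW_KINDS = {"constraint", "compliance", "stack_trace", "identifier"}
APPROVAL_ACTIONS = {"deploy", "public_write", "db_mutation", "schema_change", "payment"}


def write_decision(kind: str, text: str, contains_pii: bool = False,
                   pii_allowed: bool = False, token_budget: int = 200) -> str:
    """Decide how a record enters memory: drop, keep_raw, summarize, or log."""
    if contains_pii and not pii_allowed:
        return "drop"                      # retention policy does not permit it
    if kind in RAW_KINDS:
        return "keep_raw"                  # lossy summarization is unsafe here
    if len(text.split()) > token_budget:   # word count as a token proxy (assumption)
        return "summarize"
    return "log"


def needs_human_approval(action: str) -> bool:
    """Actions with externally visible blast radius require sign-off."""
    return action in APPROVAL_ACTIONS


print(write_decision("stack_trace", "Traceback (most recent call last): ..."))
print(write_decision("tool_output", "word " * 500))
print(needs_human_approval("deploy"), needs_human_approval("memory_write"))
```

Keeping the policy in one function makes it reviewable in a single diff, which matters when the rules are effectively compliance decisions.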

Once these decisions are committed, the system becomes measurable—which is the only honest way to justify the compute it consumes.

Measuring the ROI of Memory Pruning

- Prompt Tokens: -95%

- Context Precision: +80%

- Autonomy Duration: 10x consecutive loops

Building the rigorous write-path discipline described above requires real effort and real compute. It only pays off if you can quantify the return—which means treating the Curator as a measurable, iterative system, not an architectural article of faith.

Every background cycle the Curator runs consumes tokens and API time. That expenditure needs to show up downstream: shorter prompts fed to worker agents, fewer hallucinations, fewer costly human resets. The most effective way to make this trade-off legible is through two metrics borrowed directly from information retrieval: Context Precision and Context Recall.

Context Precision measures the fraction of retrieved context actually useful to the task at hand. Low precision means irrelevant memories shoved into every prompt—API tokens burned on noise, attention diluted. Context Recall measures whether the memories that were relevant actually made it into the prompt. Low recall leaves worker agents blind to critical facts, producing hallucinations born from incompleteness rather than from clutter.

A healthy Curator moves both numbers upward simultaneously. It surfaces necessary state and actively suppresses the rest. Optimize for precision alone—via overly aggressive pruning—and recall collapses. Optimize for recall alone—by pulling everything into context—and precision collapses. Holding that tension productively is precisely what the Curator is for.
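In their simplest set-based form, the two metrics reduce to a few lines. This sketch assumes you can label which memory IDs were actually relevant to a task, for example from human review of a sample of traces; evaluation libraries often use rank-aware or LLM-judged variants instead.

```python
def context_precision(retrieved: set, relevant: set) -> float:
    """Fraction of retrieved items that were actually relevant."""
    return len(retrieved & relevant) / len(retrieved) if retrieved else 0.0


def context_recall(retrieved: set, relevant: set) -> float:
    """Fraction of relevant items that made it into the prompt."""
    return len(retrieved & relevant) / len(relevant) if relevant else 1.0


retrieved = {"m1", "m2", "m3", "m4"}   # memory IDs placed in the prompt
relevant = {"m1", "m2", "m5"}          # memory IDs the task actually needed
print(context_precision(retrieved, relevant))
print(context_recall(retrieved, relevant))
```

Here half of the prompt was noise (`m3`, `m4`) and one needed memory (`m5`) never arrived, so both numbers have room to improve.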

You don’t need a controlled academic lab to benchmark this. Track a token-reduction metric: monitor before-and-after prompt token counts when shifting from a naive pipeline (raw vector search, full history dump) to a Curator-mediated pipeline (scored, pruned, compacted context). That delta maps directly onto API costs—every major inference provider bills per token. Instrument your prompts, tag each call by pipeline stage, and aggregate over a week. From what I’ve seen in practice, the cost reduction becomes visible within days of deployment.
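The token-reduction delta is plain arithmetic over logged prompt sizes. The sample counts below are made up for illustration, and the function name is hypothetical.

```python
def token_reduction(baseline_tokens: list, curated_tokens: list) -> float:
    """Fractional reduction in total prompt tokens after enabling the Curator."""
    baseline = sum(baseline_tokens)
    curated = sum(curated_tokens)
    if baseline == 0:
        raise ValueError("baseline token count must be positive")
    return (baseline - curated) / baseline


# Prompt token counts per call, before and after (illustrative values only).
naive_pipeline = [1000, 2000]
curated_pipeline = [400, 800]
print(f"{token_reduction(naive_pipeline, curated_pipeline):.0%} fewer prompt tokens")
```

Remember to add the Curator's own token spend to the curated side if you want a net figure rather than a worker-only one.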

The hardest metric to fake, though, is sustained autonomy duration: the number of consecutive execution loops a worker agent successfully completes without hallucinating, hitting a context-length ceiling, or requiring a human developer to intervene and reset its state. This is the ultimate functional test of whether your memory architecture actually works in production. A worker agent that loses coherence after three complex reasoning loops has a broken Curator. A worker that runs forty loops against a live, changing knowledge base while maintaining consistent grounding has a working one. Autonomy duration isn’t a vanity metric—it directly determines the practical ceiling on what agentic workflows can safely automate without human supervision.
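Measured from a run log, sustained autonomy duration is the count of consecutive successful loops before the first failure. The sketch assumes each loop has already been labeled as a success or a failure (hallucination, context ceiling, or human reset); how you produce that label is the hard part and is not shown.

```python
def autonomy_duration(loop_outcomes: list) -> int:
    """Number of consecutive successful loops before the first failure."""
    count = 0
    for succeeded in loop_outcomes:
        if not succeeded:
            break
        count += 1
    return count


def mean_autonomy(runs: list) -> float:
    """Average autonomy duration across several independent runs."""
    return sum(autonomy_duration(run) for run in runs) / len(runs) if runs else 0.0


runs = [
    [True, True, True, False, True],   # failed on loop 4
    [True] * 5,                        # never failed within the window
]
print([autonomy_duration(run) for run in runs], mean_autonomy(runs))
```

Tracking the mean across runs, rather than a single best run, keeps the metric honest when failures are intermittent.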

Together, Context Precision, Context Recall, and sustained autonomy duration form a minimal viable evaluation harness for any production Curator. None require exotic testing infrastructure. They only require rigorous instrumentation discipline.

Foundation model capabilities are converging fast. Inference pricing keeps falling. Context windows expand across providers. Gaps in reasoning quality narrow. The one variable that doesn’t commoditize is curated state: an organization’s uniquely accumulated, pruned, and structured operational memory.

The team that builds a rigorous Curator today isn’t just trimming next month’s API bills. It’s compounding a proprietary knowledge asset that appreciates with every executed agent loop. The bet is that models commoditize toward interchangeable infrastructure while memory becomes the durable product. It is a bet worth making now, before the rest of the industry makes it by default.

References

- Liu, N. F., Lin, K., Hewitt, J., Paranjape, A., Bevilacqua, M., Petroni, F., & Liang, P. (2024). Lost in the Middle: How Language Models Use Long Contexts. Transactions of the Association for Computational Linguistics. https://doi.org/10.1162/tacl_a_00638

- Long, L., Yang, R., Huang, Y., Hui, D., Zhou, A., & Yang, J. (2025). SlimInfer: Accelerating Long-Context LLM Inference via Dynamic Token Pruning. arXiv. https://doi.org/10.48550/arXiv.2508.06447

- Deng, Y., You, Z., Xiang, L., Li, Q., Yuan, P., Hong, Z., Zheng, Y., Li, W., Li, R., Liu, H., Mouratidis, K., Yiu, M. L., Li, H., Shen, Q., Mao, R., & Tang, B. (2025). AlayaDB: The Data Foundation for Efficient and Effective Long-context LLM Inference. Companion of the 2025 International Conference on Management of Data. https://doi.org/10.1145/3722212.3724428

- Papadimitriou, I., Gialampoukidis, I., Vrochidis, S., & Kompatsiaris, I. (2024). RAG Playground: A Framework for Systematic Evaluation of Retrieval Strategies and Prompt Engineering in RAG Systems. arXiv. https://doi.org/10.48550/arXiv.2412.12322

- Sarin, S., Singh, L., Sarmah, B., & Mehta, D. (2025). Memoria: A Scalable Agentic Memory Framework for Personalized Conversational AI. arXiv. https://doi.org/10.48550/arXiv.2512.12686

- Huang, W. C., Zhang, W., Liang, Y., Bei, Y., Chen, Y., Feng, T., Pan, X., Tan, Z., Wang, Y., Wei, T., Wu, S., Xu, R., Yang, L., Yang, R., Yang, W., Yeh, C. Y., Zhang, H., Zhang, H., Zhu, S., Zou, H. P., Zhao, W., Wang, S., Xu, W., Ke, Z., Hui, Z., Li, D., Wu, Y., He, L., Wang, C., Xu, X., Huang, B., Tan, J., Heinecke, S., Wang, H., Xiong, C., Metwally, A. A., Yan, J., Lee, C. Y., Zeng, H., Xia, Y., Wei, X., Payani, A., Wang, Y., Ma, H., Wang, W., Wang, C., Zhang, Y., Wang, X., Zhang, Y., You, J., Tong, H., Luo, X., Liu, X., Sun, Y., Wang, W., McAuley, J., Zou, J., Han, J., Yu, P. S., & Shu, K. (2026). Rethinking Memory Mechanisms of Foundation Agents in the Second Half: A Survey. arXiv. https://doi.org/10.48550/arXiv.2602.06052

- Bousetouane, F. (2026). AI Agents Need Memory Control Over More Context. arXiv. https://doi.org/10.48550/arXiv.2601.11653

- Yang, M., Huang, E., Zhang, L., Surdeanu, M., Wang, W., & Pan, L. (2025). How Is LLM Reasoning Distracted by Irrelevant Context? An Analysis Using a Controlled Benchmark. arXiv. https://doi.org/10.48550/arXiv.2505.18761

- Verma, N. (2026). Active Context Compression: Autonomous Memory Management in LLM Agents. arXiv. https://doi.org/10.48550/arXiv.2601.07190

- Li, X., Lyu, T., Yang, Y., Shan, L., Yang, S., Zhang, L., Huang, Z., Liu, Q., & Li, Y. (2026). Escaping the Context Bottleneck: Active Context Curation for LLM Agents via Reinforcement Learning. arXiv. https://doi.org/10.48550/arXiv.2604.11462

- Kerestecioglu, D., Robsky, A., Vasters, C., Sharma, A., & Kesselman, Y. (2026). Human-Inspired Memory Architecture for LLM Agents. arXiv. https://doi.org/10.48550/arXiv.2605.08538

- Edge, D., Trinh, H., Cheng, N., Bradley, J., Chao, A., Mody, A., Truitt, S., Metropolitansky, D., Ness, R. O., & Larson, J. (2024). From Local to Global: A Graph RAG Approach to Query-Focused Summarization. arXiv. https://doi.org/10.48550/arXiv.2404.16130